to ROAS or not to ROAS

When you measure the wrong metric, you set yourself up to fail.

While consulting on Meta ads, I obsess over which key metrics actually determine a campaign's success. Return on Ad Spend (ROAS) is the one most people prefer: $100 in ad spend at 200% ROAS gives you $200 in sales. Excellent. Makes sense. Are we done here?

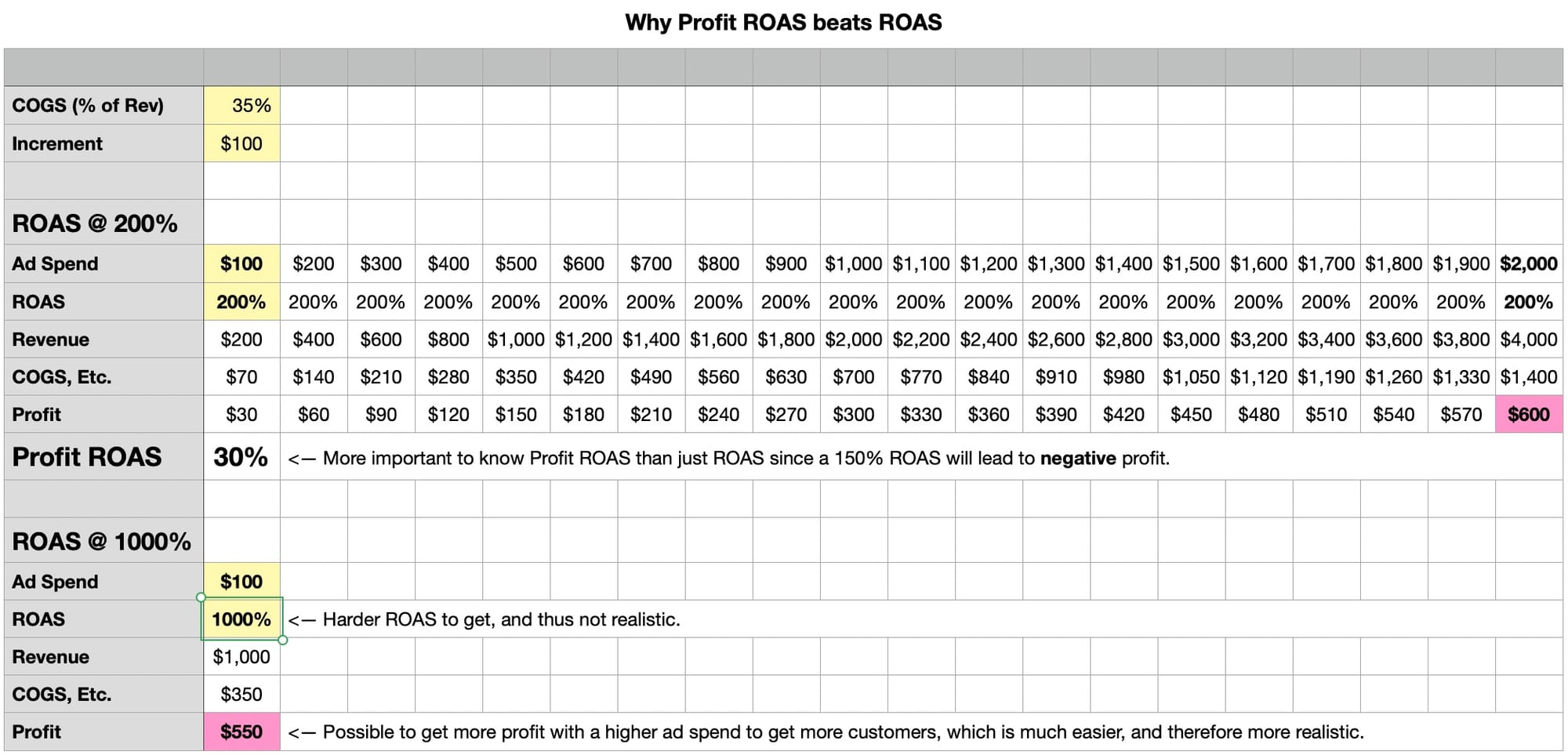

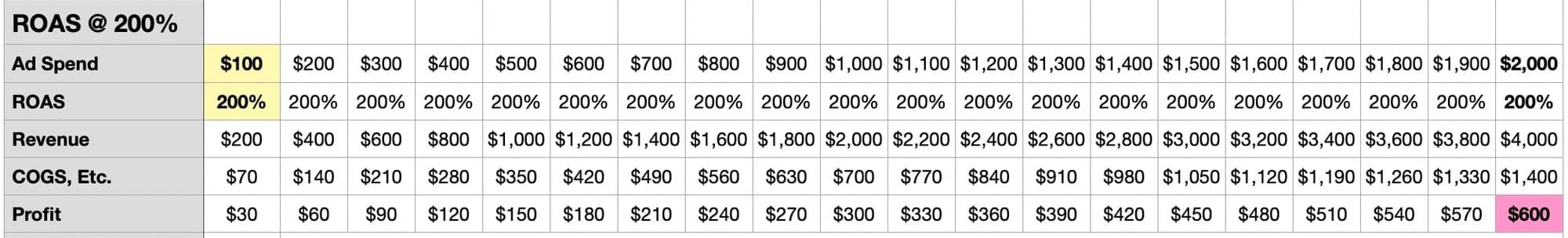

Nope. You can't isolate ROAS as the only metric to track. What if your Cost of Goods Sold (COGS) happens to be $100? Then your ROAS might still be 200%, but your profits would be zero.

Thus, while ROAS is a useful metric, the more important one is Profit ROAS: profit as a percentage of ad spend. Assuming COGS is 40% of sales, COGS on $200 in sales is $80 ($200 x 40%), so profit is $20 ($200 revenue - $100 ad spend - $80 COGS = $20 profit), a Profit ROAS of 20%.
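The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a standard formula: the function name `profit_roas` and the parameter `cogs_rate` are made up for this post, and it assumes COGS is a fixed share of sales and that Profit ROAS means profit divided by ad spend.

```python
def profit_roas(ad_spend, roas, cogs_rate):
    """Return (revenue, profit, profit_roas) for a single campaign.

    Assumes COGS is a fixed fraction of revenue (cogs_rate) and that
    Profit ROAS is profit divided by ad spend.
    """
    revenue = ad_spend * roas          # e.g. $100 at 200% ROAS -> $200
    cogs = revenue * cogs_rate         # e.g. 40% of $200 -> $80
    profit = revenue - ad_spend - cogs
    return revenue, profit, profit / ad_spend


# The example from the text: $100 ad spend, 200% ROAS, COGS at 40% of sales.
revenue, profit, p_roas = profit_roas(ad_spend=100, roas=2.0, cogs_rate=0.40)
print(f"revenue=${revenue:.0f} profit=${profit:.0f} profit_roas={p_roas:.0%}")

# The zero-profit case: COGS of $100 on $200 in sales is a 50% COGS rate.
_, zero_profit, _ = profit_roas(ad_spend=100, roas=2.0, cogs_rate=0.50)
print(f"profit with $100 COGS: ${zero_profit:.0f}")
```

The same 200% ROAS produces $20 of profit in one case and nothing in the other, which is why ROAS alone can't tell you whether a campaign is working.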

It's always tempting to project a crazy high ROAS, but is that ever really achievable? A 10X ROAS (1,000%) would surely be very profitable, but few advertisers ever reach it. It's better to aim for a reasonable Profit ROAS and take the steady wins, even if that Profit ROAS is still small.

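The better bet is to scale up to more customers (meaning more ad spend) to make more profit. Using the same example (200% ROAS, COGS at 40% of sales), $2,000 of ad spend brings in $4,000 in sales and $400 in profit, a reasonable 20% Profit ROAS and 20 times the profit of the $100 campaign. For comparison, $100 of ad spend at an astronomical 10X ROAS would make $500, and the steady campaign matches that at $2,500 of ad spend and beats it beyond that.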
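To see how scale compares with a sky-high ROAS, here is a rough sketch under two simplifying assumptions that are mine, not guarantees: COGS stays at 40% of sales, and ROAS holds steady as ad spend grows. The helper `campaign_profit` is a hypothetical name used only for illustration.

```python
def campaign_profit(ad_spend, roas, cogs_rate=0.40):
    """Profit after ad spend and COGS, with COGS as a fixed share of revenue."""
    revenue = ad_spend * roas
    return revenue - ad_spend - revenue * cogs_rate


# Scenarios: (label, ad spend in dollars, ROAS as a multiple)
scenarios = [
    ("small spend, 200% ROAS", 100, 2.0),
    ("scaled spend, 200% ROAS", 2000, 2.0),
    ("small spend, 10X ROAS", 100, 10.0),
]
for label, spend, roas in scenarios:
    profit = campaign_profit(spend, roas)
    print(f"{label}: profit=${profit:,.0f}, profit ROAS={profit / spend:.0%}")

# Ad spend at 200% ROAS needed to match the profit of $100 at 10X ROAS.
target_profit = campaign_profit(100, 10.0)
profit_per_dollar = campaign_profit(1, 2.0)
break_even_spend = target_profit / profit_per_dollar
print(f"spend needed at 200% ROAS: ${break_even_spend:,.0f}")
```

With a different COGS rate, or a ROAS that slips as spend increases, the break-even point moves, so it's worth rerunning the comparison with your own numbers.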

Here's a spreadsheet that proves out the math: